AN OVERVIEW

Waiting in long line-ups on campus is commonplace for UBC students. Long queues at food vendors and bus stops create discontent and make it difficult for students to estimate how long a particular activity will take. To address this problem, we decided to create a tool that helps users anticipate wait times and crowding. We tested different machine learning models on video feeds, with the goal of presenting real-time queueing data to users through a user interface. This solution has numerous smart city applications in buildings, transportation, and traffic management, and it could positively impact the student experience at UBC Vancouver. You can read more about the idea and its benefits in our proposal below.

If you want to take a look at the source code, you can find it on GitHub here.

This project is still in the fine-tuning phase, but we have been able to achieve some pretty cool results!

PROCESS

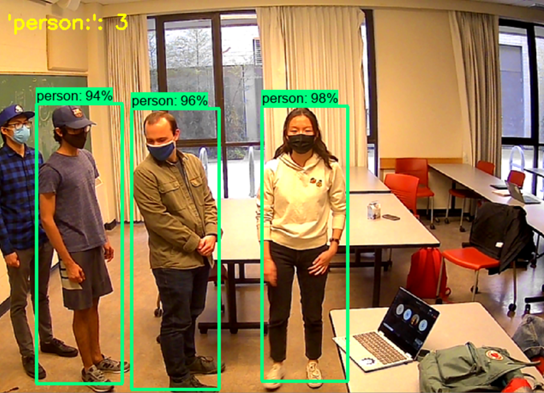

Our first attempt at a solution applied the YOLOv4 model for object detection. However, this model could not count people standing in line. We therefore switched to the pretrained TensorFlow Counting API, which tracks objects and then counts pedestrians. Tracking ensures that people in the queue are not double counted if they move away from their original location. With this model, we define a line in the video frame called the region of interest (ROI), and people are counted when they cross it.

Next, we gathered test data (e.g., videos of people queueing) from different online sources and ran the model on each video. By adjusting the ROI variable and other functions, we were able to count people passing through different areas of a video frame.

Given this promising performance, we tested the model on real-time footage of UBC Smart City team members. We uploaded the code to an NVIDIA Jetson Nano single-board computer (SBC) and, using an attached webcam module, captured and processed footage in real time. You can see the results from this test below.

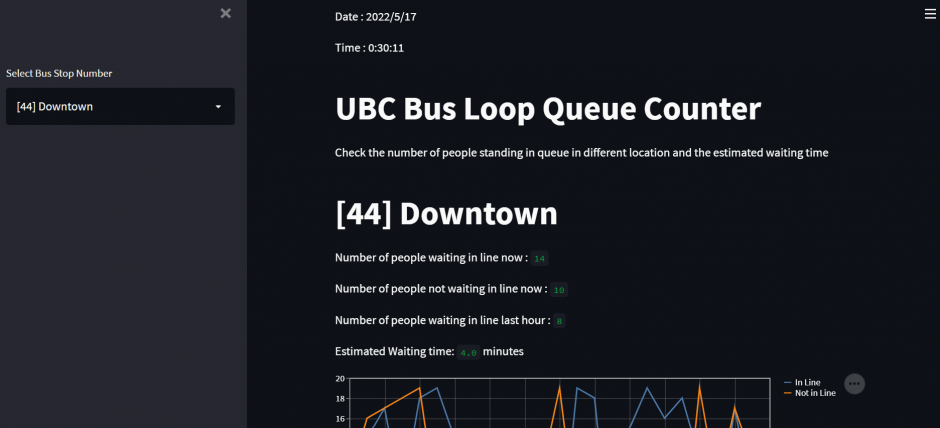

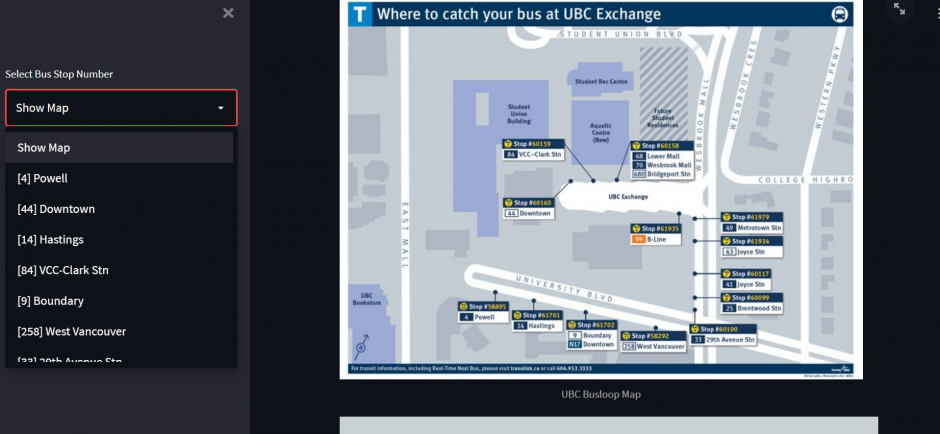
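To make the ROI counting rule described above more concrete, here is a minimal sketch of the idea in plain Python. It is not the code of the counting API we used. It assumes a tracker has already assigned each person a stable ID and a centroid x-coordinate per frame, and the names `LineCounter`, `roi_x`, and `count_crossings` are hypothetical, chosen only for illustration.

```python
from dataclasses import dataclass, field


@dataclass
class LineCounter:
    """Counts tracked people whose centroid crosses a vertical ROI line.

    Illustrative only: assumes an upstream tracker supplies stable IDs.
    """
    roi_x: float                                  # x-position of the ROI line in pixels (assumed)
    count: int = 0
    last_x: dict = field(default_factory=dict)    # track ID -> centroid x in the previous frame
    counted: set = field(default_factory=set)     # track IDs that have already been counted

    def update(self, detections):
        """Process one frame; `detections` maps track ID -> centroid x."""
        for track_id, x in detections.items():
            prev = self.last_x.get(track_id)
            if prev is not None and track_id not in self.counted:
                # A crossing occurs when the centroid changes sides of the line.
                if (prev < self.roi_x) != (x < self.roi_x):
                    self.count += 1
                    self.counted.add(track_id)    # never count the same person twice
            self.last_x[track_id] = x
        return self.count


def count_crossings(frames, roi_x):
    """Run the counter over a sequence of frames and return the total."""
    counter = LineCounter(roi_x=roi_x)
    for detections in frames:
        counter.update(detections)
    return counter.count


# Made-up centroid positions for two tracked people over three frames.
example_frames = [
    {1: 90, 2: 150},
    {1: 105, 2: 140},   # person 1 crosses the line at x = 100
    {1: 95, 2: 99},     # person 1 steps back (ignored); person 2 crosses
]
total = count_crossings(example_frames, roi_x=100)
```

Because each ID is counted at most once, a person who steps back over the line is not counted again. This is the double-counting protection that tracking is meant to provide, and it also shows why the result depends so strongly on where the ROI line is placed.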

We also designed a potential user interface for the app. We built the UI as a web app using Streamlit, a Python library for visualizing and presenting ML data. Waiting times can be predicted from the queue length with a machine learning regression model trained on data from previous days.
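As a rough illustration of how a wait time could be predicted from queue length, the sketch below fits a one-variable least-squares line in plain Python. It is an assumption about what such a regression model might look like, not our trained model. The function names `fit_line` and `predict_wait` are hypothetical, and the numbers are invented placeholders, not measurements from campus.

```python
def fit_line(queue_lengths, wait_minutes):
    """Ordinary least-squares fit of wait = slope * queue_length + intercept."""
    n = len(queue_lengths)
    mean_x = sum(queue_lengths) / n
    mean_y = sum(wait_minutes) / n
    sxx = sum((x - mean_x) ** 2 for x in queue_lengths)
    sxy = sum((x - mean_x) * (y - mean_y)
              for x, y in zip(queue_lengths, wait_minutes))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    return slope, intercept


def predict_wait(queue_length, slope, intercept):
    """Predicted wait in minutes, clamped so it is never negative."""
    return max(0.0, slope * queue_length + intercept)


# Placeholder "previous days" data, invented purely for illustration.
past_queue_lengths = [2, 4, 6, 8]
past_wait_minutes = [3, 5, 7, 9]

slope, intercept = fit_line(past_queue_lengths, past_wait_minutes)
estimate = predict_wait(10, slope, intercept)
```

In a real deployment, the training pairs would come from logged queue counts and observed wait times, and a richer model could add features such as time of day.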

NEXT STEPS

This model had trouble with videos in which people are “stacked” on top of each other (see image below) or filmed at unexpected angles. Its ability to detect the right number of people was also highly dependent on the position of the specified ROI.

Therefore, a key next step for the team is to apply a different model, YOLOv5 with Deep SORT tracking, integrated with a motion detection model, to improve on our current results.

Gaining approval from UBC and TransLink to set up this device for testing at a bus stop was not feasible within our desired timeframe. Therefore, we contacted private vendors on campus, including McDonald’s and Starbucks, for permission to track queue lengths at their locations for research purposes. If and when we gain approval for testing, we will proceed by fine-tuning our model and determining the optimal camera angle and location at each site.

LEARNING OUTCOMES

All in all, this project benefitted team members in the following ways:

- Because this was our first machine learning project, we learned a lot about object detection and tracking algorithms.

- Because this was our first time trying to market and deploy an actual device for testing, we learned what it takes to create hands-on projects with tangible benefits.

- We developed good coding practices to ensure that this project can be picked up by future team members for continued improvement.

CONCLUSION

While we were not able to set up this device for testing and deployment by the end of the school year, we accomplished a lot, from ideation, to software and hardware development, to the first steps of implementation.

In the future, we can further iterate and improve on our ML model, UI, and hardware setup. At the end of the day, this project was a great learning experience and gave our team members exposure to machine learning concepts. We established a strong legacy project for next year’s team to continue if they choose.